You’ll identify workflow bottlenecks in AI platforms by mapping every step from data ingestion to deployment, then measuring delays at handoff points between systems. Track API response times, monitor 429 status codes for rate limits, and log timestamps at data transfer points. Fix bottlenecks by replacing synchronous calls with asynchronous patterns, automating low-risk approval steps, and implementing token bucket throttling for rate-limited services. Set threshold-based alerts at 70% and 85% capacity to catch issues before they cascade. The sections below break down each diagnostic technique and solution.

Map Your Complete AI Workflow Before Diagnosing Bottlenecks

Before you can identify what’s slowing down your AI platform, you need a clear picture of how work actually flows through your system. Start by documenting every step – from data ingestion to model deployment. Don’t rely on assumptions or outdated diagrams. Walk through your actual processes and capture what’s really happening.

Break free from guesswork by tracking where data moves, who touches it, and how long each stage takes. Identify handoffs between teams, automated processes, and manual interventions. You’ll discover hidden dependencies you didn’t know existed.

This mapping exercise reveals your workflow’s true nature. You can’t optimise what you don’t understand. Once you’ve got this foundation, you’ll pinpoint bottlenecks with precision instead of wasting time fixing symptoms.

Identify Workflow Bottlenecks in Data Handoff Points

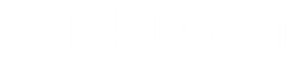

Data handoff points between different AI platform components are where bottlenecks most frequently occur. You’ll need to monitor data transfer delays by measuring the time elapsed between when one system sends data and when another receives it. Focus your analysis on API integration points where mismatched data formats, rate limits, or synchronisation issues can create significant slowdowns.

Monitor Data Transfer Delays

Where exactly does your AI workflow slow down when information moves between systems? You’ll need real-time monitoring tools to capture these delays. Install logging mechanisms at each handoff point – where data exits one service and enters another. Track timestamps, payload sizes, and network latency.

Set up dashboard alerts when transfer times exceed your baselines. You’re looking for patterns: Does data crawl during peak hours? Are certain endpoints consistently sluggish? Do larger datasets trigger disproportionate delays?

Don’t just collect metrics – analyse them. Compare actual transfer speeds against your infrastructure’s theoretical capacity. The gap reveals bottlenecks you can eliminate. Use distributed tracing to follow individual requests across your entire pipeline, exposing hidden slowdowns that aggregate monitoring misses.
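
As a minimal sketch of the handoff logging described above, the Python below records a duration and payload size for each transfer and filters out the ones that exceed a baseline. The names `timed_handoff`, `handoff_log`, and `slow_handoffs` are hypothetical, and the in-memory list stands in for whatever logging or tracing backend you actually use.

```python
import time
from contextlib import contextmanager

# Hypothetical in-memory store; a real setup would send these
# records to a logging or tracing backend instead.
handoff_log = []


@contextmanager
def timed_handoff(name, payload_bytes, clock=time.perf_counter):
    """Record how long one handoff takes, along with its payload size."""
    start = clock()
    try:
        yield
    finally:
        handoff_log.append({
            "handoff": name,
            "payload_bytes": payload_bytes,
            "seconds": clock() - start,
        })


def slow_handoffs(log, baseline_seconds):
    """Return the records whose transfer time exceeded the baseline."""
    return [record for record in log if record["seconds"] > baseline_seconds]


# Usage: wrap the code that sends data to the next service.
payload = b"x" * 1024
with timed_handoff("ingest->preprocess", len(payload)):
    pass  # send the payload here
```

Passing the clock in as a parameter is a design choice that makes the timing testable without real delays.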

Analyse API Integration Points

API integration points create friction that monitoring alone can’t solve. You’ll find bottlenecks where systems exchange data – authentication layers, payload transformations, and rate-limited endpoints. Map every handoff point between your AI workflows and external services. Don’t accept vendor promises; verify actual performance.

| Integration Point | Common Bottleneck |
|---|---|
| Authentication APIs | Token refresh delays |
| Data transformation | Schema validation overhead |
| Third-party services | Rate limiting constraints |

Break free from assumptions about “seamless” integrations. Test each connection under production loads, measuring latency at every boundary. You control optimisation when you understand where data stalls. Replace synchronous calls with async patterns where possible. Cache authentication tokens aggressively. Your platform’s speed depends on eliminating these hidden delays.
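
To illustrate the difference between synchronous and async call patterns, here is a small sketch using Python's `asyncio`. The `call_service` function is a stand-in that simulates network wait with `asyncio.sleep`; the service names and delays are made up for illustration. It only helps when the calls are independent of each other.

```python
import asyncio
import time


async def call_service(name, delay):
    """Stand-in for a network call; asyncio.sleep simulates I/O wait."""
    await asyncio.sleep(delay)
    return name


async def run_sequential(calls):
    # Each call waits for the previous one to finish.
    return [await call_service(name, delay) for name, delay in calls]


async def run_concurrent(calls):
    # All calls wait at the same time; results keep the input order.
    return await asyncio.gather(
        *(call_service(name, delay) for name, delay in calls)
    )


def timed(coro_fn, calls):
    """Run one of the strategies above and return (results, elapsed seconds)."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn(calls))
    return results, time.perf_counter() - start
```

With three independent calls of similar duration, the concurrent version should take roughly as long as the slowest call rather than the sum of all three.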

Find Processing Bottlenecks in Multi-Step AI Sequences

When you’re troubleshooting sluggish AI workflows, you’ll often discover that a single stage in your pipeline is silently sabotaging the entire sequence. Start by measuring execution time for each step independently. You’ll want to instrument your code with timestamps at every transformation point, model inference, and data handoff.

Look for serialisation overhead, where converting data between formats at stage boundaries adds avoidable delay. Check whether you’re reloading models on every request instead of keeping them warm in memory. Examine queue depths between stages – a queue that keeps growing points to an overwhelmed component downstream of it.

Don’t assume your GPU is the culprit. Often the constraint is preprocessing running on the CPU or database queries blocking the flow. Profile systematically and isolate the constraint before changing anything, so you fix the real cause of the slowdown rather than a symptom.
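
One way to measure each step independently is sketched below. It assumes the pipeline can be expressed as an ordered list of `(name, function)` pairs, which is an assumption about your code rather than a requirement; `profile_pipeline` and `slowest_stage` are hypothetical helper names.

```python
import time


def profile_pipeline(stages, data, clock=time.perf_counter):
    """Run each (name, fn) stage in order and return (output, timings)."""
    timings = {}
    for name, fn in stages:
        start = clock()
        data = fn(data)
        timings[name] = clock() - start
    return data, timings


def slowest_stage(timings):
    """Name of the stage that took the most wall-clock time."""
    return max(timings, key=timings.get)


# Usage with placeholder stages; replace the lambdas with real steps.
stages = [
    ("preprocess", lambda rows: [r.strip() for r in rows]),
    ("inference", lambda rows: [len(r) for r in rows]),
]
output, timings = profile_pipeline(stages, [" a ", " bb "])
```

Wall-clock timing per stage shows where time goes, not why. Once the slowest stage is known, a profiler on that stage alone is usually the next step.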

Fix API Rate Limits Slowing Your Automation Pipeline

API rate limits can bring your automation pipeline to a crawl if you’re not managing them effectively. You’ll need to identify which services impose the strictest constraints, then implement throttling strategies that distribute requests within allowable thresholds. By continuously monitoring your API usage patterns, you can optimise your workflow to maximise throughput without triggering rate limit errors.

Identify Rate Limit Constraints

Before you can resolve performance issues in your automation pipeline, you’ll need to pinpoint exactly where rate limits are throttling your requests. Start by monitoring your API response headers – they’ll reveal current usage, remaining quota, and reset times. Track 429 status codes that signal you’ve hit the ceiling.

Use logging tools to capture request timestamps and identify patterns. Are you overwhelming specific endpoints during peak hours? Run diagnostic tests at different intervals to measure throughput.

Check your provider’s documentation for tier-specific limits on requests per second, minute, or day. Compare these against your actual usage metrics. You’ll often discover that certain operations consume more quota than others, revealing optimisation opportunities that free your workflow from unnecessary constraints.
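
The sketch below shows one way to summarise rate-limit state from a single response. The `X-RateLimit-*` header names are a widespread convention, not a standard, so treat them as assumptions and check your provider's documentation for the real names. `Retry-After` is a standard HTTP header, but this sketch only handles its seconds form, not the date form.

```python
def read_rate_limit(status_code, headers):
    """Summarise rate-limit state from one HTTP response.

    Header names are assumed conventions; providers differ.
    """
    h = {key.lower(): value for key, value in headers.items()}

    def as_int(value):
        # Only plain non-negative integers are parsed; anything else is ignored.
        return int(value) if value is not None and value.isdigit() else None

    return {
        "limited": status_code == 429,
        "limit": as_int(h.get("x-ratelimit-limit")),
        "remaining": as_int(h.get("x-ratelimit-remaining")),
        # Reset format varies by provider (epoch time or seconds), so keep it raw.
        "reset_raw": h.get("x-ratelimit-reset"),
        "retry_after_seconds": as_int(h.get("retry-after")),
    }


# Usage with a made-up response:
state = read_rate_limit(429, {"Retry-After": "30", "X-RateLimit-Remaining": "0"})
```

Logging this summary with a timestamp for every response gives you the usage pattern to compare against the documented limits.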

Implement Request Throttling Strategies

Once you’ve mapped your rate limit constraints, you’ll need a systematic approach to control request flow. Throttling doesn’t remove API limits; it keeps your request rate inside them so the pipeline keeps moving instead of stalling on rejected calls. You set the pace deliberately rather than discovering the limit through errors.

Implement these proven strategies:

- Token bucket algorithm: Accumulate request tokens over time, spending them when needed for burst capacity

- Exponential backoff: Automatically retry failed requests with increasing delays to avoid cascade failures

- Request queuing: Stack incoming requests and despatch them at sustainable intervals

- Priority-based routing: Assign critical operations higher precedence while batching lower-priority tasks

Configure your throttling layer to adapt dynamically based on real-time API responses. You’ll transform chaotic request patterns into smooth, predictable workflows that maximise throughput without triggering penalties.
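
The first two strategies in the list can be sketched in a few lines of Python. This is a minimal, single-threaded illustration: the `rate` and `capacity` values are placeholders to be set from your provider's limits, and the class is not thread-safe as written.

```python
import time


class TokenBucket:
    """Tokens refill at `rate` per second up to `capacity` (the burst size)."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def try_acquire(self, cost=1):
        """Spend `cost` tokens if available; return whether the request may go."""
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


def backoff_delay(attempt, base=1.0, cap=60.0):
    """Seconds to wait before retry `attempt` (0-based): base * 2**attempt, capped."""
    return min(cap, base * (2 ** attempt))
```

A caller would check `try_acquire()` before each request and, after a 429 response, sleep for `backoff_delay(attempt)` before retrying. Production implementations commonly add random jitter to the delay so that many clients do not retry at the same instant.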

Monitor and Optimise Usage

Real-time visibility into your API consumption patterns separates efficient pipelines from constantly broken ones. You’ll break free from reactive firefighting when you track metrics that actually matter. Deploy dashboards monitoring request volume, error rates, and latency across endpoints. Set alerts before you hit limits – not after your pipeline crashes.

| Metric | Action Threshold |
|---|---|
| Rate limit utilisation | 70% of quota |
| Error rate spike | 5% increase in 5 min |
| Response latency | 2x baseline average |
| Queue depth | 1000 pending requests |
| Cost per operation | 20% above target |

Analyse patterns weekly. Identify which operations consume resources unnecessarily. Eliminate redundant calls, cache responses aggressively, and batch requests strategically. You’re not optimising for perfection – you’re optimising for autonomy and uninterrupted execution.
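
The thresholds in the table can be expressed as a single check, sketched below. The dictionary keys are hypothetical names for values your monitoring would supply, and the sketch reads "5% increase" as five percentage points, which is an assumption about what the table intends.

```python
def breached_thresholds(m):
    """Return the names of metrics that are at or past their action threshold."""
    checks = {
        "rate_limit_utilisation": m["quota_used"] / m["quota_limit"] >= 0.70,
        # Assumption: "5% increase" means five percentage points over 5 minutes.
        "error_rate_spike": m["error_rate_now"] - m["error_rate_5_min_ago"] >= 0.05,
        "response_latency": m["latency_ms"] >= 2 * m["baseline_latency_ms"],
        "queue_depth": m["pending_requests"] >= 1000,
        "cost_per_operation": m["cost_per_op"] >= 1.20 * m["target_cost_per_op"],
    }
    return [name for name, breached in checks.items() if breached]


# Usage with made-up readings:
snapshot = {
    "quota_used": 800, "quota_limit": 1000,
    "error_rate_now": 0.02, "error_rate_5_min_ago": 0.01,
    "latency_ms": 450, "baseline_latency_ms": 200,
    "pending_requests": 120,
    "cost_per_op": 0.010, "target_cost_per_op": 0.010,
}
alerts = breached_thresholds(snapshot)
```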

Remove Manual Approval Steps Blocking Workflows

Manual approval steps create significant friction in AI workflows, forcing systems to pause while waiting for human sign-off on routine decisions. You’re fundamentally handcuffing your AI platform’s potential when you require approvals for predictable, low-risk tasks.

Automate decisions that don’t need human judgement. Establish clear thresholds where AI can operate independently:

- Data processing requests under specific size limits

- Standard model deployments following pre-approved configurations

- Routine resource allocations within budget parameters

- Regular reporting outputs matching established templates

Reserve manual approvals exclusively for high-stakes scenarios like production releases affecting critical systems or budget changes exceeding predetermined limits. You’ll accelerate workflows dramatically while maintaining necessary oversight where it actually matters. Configure your platform to distinguish between scenarios requiring human intervention and those that don’t.
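
A rule like this can be encoded directly, as in the sketch below. Every limit, configuration name, and request field here is hypothetical and exists only to show the shape of the decision; the important design choice is that anything unrecognised defaults to human review.

```python
from dataclasses import dataclass

# Hypothetical limits; set these from your own risk policy.
MAX_AUTO_DATA_GB = 50
MAX_AUTO_BUDGET = 500
PRE_APPROVED_CONFIGS = {"standard-small", "standard-medium"}


@dataclass
class Request:
    kind: str
    data_gb: float = 0.0
    config: str = ""
    budget: float = 0.0
    uses_standard_template: bool = False
    affects_critical_system: bool = False


def needs_human_approval(req):
    """True when the request falls outside the pre-agreed low-risk thresholds."""
    if req.affects_critical_system:
        return True
    if req.kind == "data_processing":
        return req.data_gb > MAX_AUTO_DATA_GB
    if req.kind == "model_deployment":
        return req.config not in PRE_APPROVED_CONFIGS
    if req.kind == "resource_allocation":
        return req.budget > MAX_AUTO_BUDGET
    if req.kind == "report":
        return not req.uses_standard_template
    return True  # unknown request kinds always go to a human
```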

Adjust Trigger Frequency to Prevent Queue Overload

When triggers fire too frequently, they flood your AI platform’s processing queues faster than the system can handle them, creating cascading delays that paralyse workflows.

You’re in control of trigger frequency. Evaluate each trigger’s necessity and adjust intervals based on actual processing capacity. If you’re checking for updates every minute but processing takes five, you’re building a backlog that’ll crush performance.


Implement rate limiting to cap how many triggers execute simultaneously. Set realistic intervals that match your system’s throughput rather than arbitrary schedules.
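
The arithmetic behind the one-minute trigger and five-minute job can be worked through with the sketch below. It assumes steady arrival and processing rates and identical workers, which real systems only approximate; the function names are hypothetical.

```python
def backlog_after(minutes, trigger_interval_min, processing_min, workers=1):
    """Jobs triggered but not yet finished after `minutes`, at steady rates."""
    arrived = minutes / trigger_interval_min
    completed = min(arrived, workers * minutes / processing_min)
    return arrived - completed


def min_safe_interval(processing_min, workers=1):
    """Shortest trigger interval the workers can sustain without a growing queue."""
    return processing_min / workers


# One trigger per minute, five minutes per job, one worker, over an hour:
# 60 jobs arrive, 12 complete, so 48 are still waiting.
backlog = backlog_after(60, trigger_interval_min=1, processing_min=5)
```

Under these assumptions the backlog grows by 48 jobs every hour. Either the interval has to rise to at least `min_safe_interval(5)`, which is five minutes, or the worker count has to rise to five.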

Monitor queue depth continuously. When queues exceed healthy thresholds, increase trigger intervals or add processing resources. You can’t liberate workflows while drowning them in unnecessary executions.

Balance responsiveness with sustainability. Your platform should serve you efficiently, not collapse under self-imposed pressure.

Calculate What Workflow Bottlenecks Cost Your Platform

Every delayed workflow bleeds money from your operation through wasted compute resources, lost productivity, and missed opportunities. You’re paying for infrastructure that sits idle while tasks queue up, then overloads when everything processes simultaneously. Calculate your true costs by tracking these metrics:

- Compute waste: Idle GPU hours multiplied by hourly rates

- Developer time: Hours spent debugging bottlenecks at their salary cost

- Customer churn: Revenue lost from users who abandon slow processes

- Opportunity cost: Deals you couldn’t close due to delayed outputs

Pull a month of your platform’s queue metrics, multiply blocked task hours by your resource costs, and add developer intervention time. That’s your baseline – a conservative monthly estimate of what inefficiency costs you, and the figure to measure any fix against.
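
The calculation can be sketched as a small function that totals the four cost categories listed above. All input figures in the usage example are made up; churn and opportunity cost are optional because they are usually estimates rather than measured values.

```python
def monthly_bottleneck_cost(idle_gpu_hours, gpu_hourly_rate,
                            debug_hours, developer_hourly_cost,
                            churned_revenue=0.0, lost_deal_value=0.0):
    """Break down and total the monthly cost of workflow bottlenecks."""
    breakdown = {
        "compute_waste": idle_gpu_hours * gpu_hourly_rate,
        "developer_time": debug_hours * developer_hourly_cost,
        "customer_churn": churned_revenue,
        "opportunity_cost": lost_deal_value,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Usage with illustrative numbers only:
cost = monthly_bottleneck_cost(
    idle_gpu_hours=120, gpu_hourly_rate=2.5,
    debug_hours=16, developer_hourly_cost=80,
    churned_revenue=500,
)
```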

Set Up Alerts to Catch Future Workflow Bottlenecks

Your platform can’t fix bottlenecks you don’t see happening in real-time. Break free from reactive firefighting by implementing proactive monitoring that catches problems before they escalate.

Configure threshold-based alerts for critical metrics: processing queue depth, API response times, GPU utilisation rates, and memory consumption. Set tiered warnings – yellow alerts at 70% capacity, red at 85% – giving you breathing room to act.
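
The tiered warnings map onto a very small classification function, sketched below. The 70% and 85% levels come from the text; the function name, the "green" label for the normal state, and the idea of passing used and total capacity as numbers are assumptions for illustration.

```python
WARN_AT = 0.70      # yellow alert threshold
CRITICAL_AT = 0.85  # red alert threshold


def alert_level(used, capacity):
    """Classify utilisation of any capacity-bound metric as green, yellow, or red."""
    utilisation = used / capacity
    if utilisation >= CRITICAL_AT:
        return "red"
    if utilisation >= WARN_AT:
        return "yellow"
    return "green"


# Usage: the same function works for queue depth, GPU, or memory.
levels = {
    "queue": alert_level(500, 1000),
    "gpu": alert_level(75, 100),
    "memory": alert_level(58, 64),
}
```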

Don’t drown in noise. Filter alerts by severity and business impact. You need actionable intelligence, not constant notifications.

Use automated anomaly detection to spot unusual patterns your static thresholds might miss. Machine learning models can identify subtle degradation trends that signal emerging bottlenecks.
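
As a much simpler stand-in for the machine learning models mentioned above, the sketch below flags a reading that sits far from recent history using a z-score. It is a basic statistical check, not a trained model, and the threshold of 3 standard deviations is a conventional starting point rather than a recommendation for your data.

```python
import statistics


def is_anomalous(history, value, z_threshold=3.0):
    """True when `value` is more than `z_threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > z_threshold


# Usage: recent latency readings in milliseconds (made-up values).
recent_latency_ms = [100, 102, 98, 101, 99]
spike = is_anomalous(recent_latency_ms, 130)
```

A check like this catches sudden jumps even when the value is still below a static threshold, but it will not detect slow drift, because the drift gradually becomes part of the history it compares against.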

Route alerts to the right teams instantly through Slack, PagerDuty, or your preferred channels. Speed matters when preventing small issues from becoming platform-wide failures.